AI Coding Agents Face Critical Security Risks Without Isolated Execution Environments, Experts Warn

Summary

Experts warn that AI coding agents running without isolated execution environments are exposed to prompt injection attacks, which can manipulate an agent into running malicious code that steals credentials, deletes data, or compromises connected services.

Key Points

- Agentic systems are increasingly adopting the coding-agent pattern, in which the AI reads the filesystem, runs shell commands, and generates code. This creates serious security risks when all of these components share the same trust context.

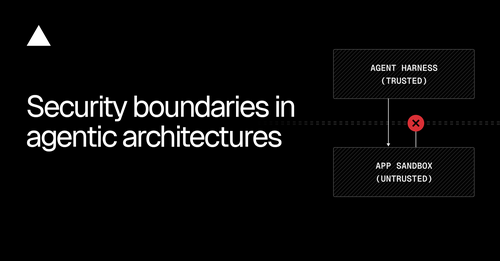

- Prompt injection attacks can manipulate an agent into executing malicious generated code that exfiltrates credentials, deletes data, or compromises connected services. This makes it critical to separate the agent harness, generated-code execution, and secrets into distinct security contexts.

- The strongest recommended architecture runs the agent harness on standard compute with Fluid compute optimization, executes generated code in ephemeral isolated sandboxes, and routes credentials through a secret injection proxy so that untrusted generated programs never have direct access to them.
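
To make the secret injection idea concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the implementation of any particular platform: the names `SecretInjectionProxy`, `OutboundRequest`, and `PLACEHOLDER`, the hostname `api.example.com`, and the key value are all hypothetical. The sandboxed code only ever holds a placeholder token. A proxy running outside the sandbox swaps in the real credential, and only for destinations on an allowlist.

```python
from dataclasses import dataclass
from urllib.parse import urlsplit

# Hypothetical placeholder: the only "credential" the sandboxed code ever sees.
PLACEHOLDER = "SANDBOX_PLACEHOLDER_TOKEN"


@dataclass(frozen=True)
class OutboundRequest:
    """A simplified outbound HTTP request leaving the sandbox (assumed shape)."""
    url: str
    headers: dict[str, str]


@dataclass
class SecretInjectionProxy:
    """Runs outside the sandbox and holds the real secrets.

    secrets_by_host maps each allowed hostname to its real credential.
    """
    secrets_by_host: dict[str, str]

    def forward(self, request: OutboundRequest) -> OutboundRequest:
        host = urlsplit(request.url).hostname
        # Block egress to any host that is not explicitly allowed.
        if host not in self.secrets_by_host:
            raise PermissionError(f"egress to {host!r} is not allowed")
        real_secret = self.secrets_by_host[host]
        # Swap the placeholder for the real credential in header values only;
        # the original request object is left untouched.
        headers = {
            name: value.replace(PLACEHOLDER, real_secret)
            for name, value in request.headers.items()
        }
        return OutboundRequest(request.url, headers)


# Usage: generated code builds a request with the placeholder...
proxy = SecretInjectionProxy({"api.example.com": "real-key-123"})
sandbox_request = OutboundRequest(
    "https://api.example.com/v1/items",
    {"Authorization": f"Bearer {PLACEHOLDER}"},
)
# ...and the proxy injects the real key on the way out.
forwarded = proxy.forward(sandbox_request)
```

In this sketch, a prompt-injected program that tries to send the token to an attacker-controlled host gains nothing: the proxy refuses the request, and even if it did not, the token is only a placeholder. A real deployment would also need to enforce that the sandbox has no network path that bypasses the proxy.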