AI-Powered Brain Interfaces Decode Inner Speech, Emotions, and Mental Images in Real Time

Summary

AI-powered brain interfaces are now decoding inner speech, emotions, and mental images in real time. Stanford researchers have reached up to 74% accuracy in translating paralyzed patients' imagined sentences into text, while scientists elsewhere are working to reconstruct sounds, dreams, and emotional expression directly from brain activity.

Key Points

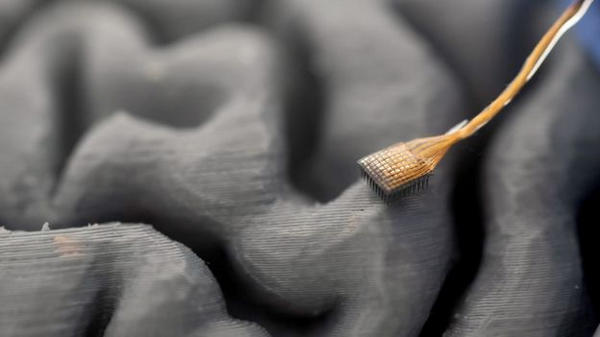

- Stanford University researchers decode inner speech in real time from a paralyzed woman using surgically implanted electrodes and AI, achieving up to 74% accuracy in translating imagined sentences into on-screen text.

- Scientists at the University of California, Davis push brain-computer interface technology further by decoding not just words but also non-verbal speech elements like pitch, intonation, and rhythm, allowing ALS patients to communicate with emotional expression.

- Researchers in Japan advance 'mind captioning' by combining AI tools with non-invasive brain scans to reconstruct the images and audio, and potentially the dreams, that people see or hear in their minds, opening the door to a better understanding of psychiatric conditions and animal perception.