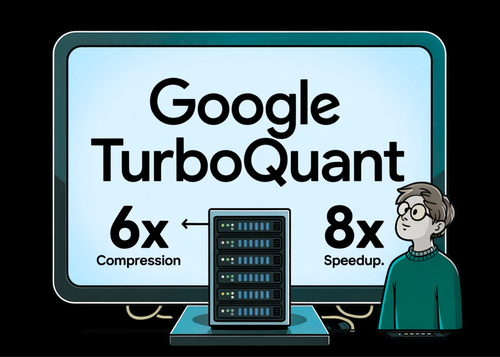

Google's TurboQuant Slashes LLM Memory by 5x and Boosts Speed 8x With No Accuracy Loss

Summary

Google's TurboQuant is a data-oblivious quantization algorithm that cuts large language model Key-Value cache memory by more than 5x and delivers up to 8x speedup with no accuracy loss. It requires no dataset-specific tuning and maintained perfect retrieval accuracy across 104,000 tokens in benchmark tests.

Key Points

- Google's research team introduces TurboQuant, a data-oblivious quantization algorithm that compresses LLM Key-Value cache memory by over 5x and delivers up to 8x speedup with zero accuracy loss, requiring no dataset-specific tuning or calibration.

- TurboQuant achieves distortion rates close to the theoretical optimum by applying a random rotation to each input vector, which makes efficient scalar quantization of each coordinate possible. It works in two stages: an MSE-optimized quantizer comes first, followed by a 1-bit QJL transform that yields the unbiased inner product estimates that transformer attention mechanisms depend on.

- In benchmarks using Llama-3.1-8B-Instruct and Ministral-7B-Instruct, TurboQuant maintains 100% retrieval accuracy on the Needle-In-A-Haystack test up to 104k tokens under 4x compression. It also reduces vector database indexing time to virtually zero, compared with the hundreds of seconds required by traditional Product Quantization.
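
The rotation, per-coordinate quantization, and 1-bit residual correction described in the key points can be sketched roughly as follows. This is a minimal pure-Python illustration, not Google's implementation. The function names, the uniform quantization grid, the clipping range of 3 standard deviations, and the Gaussian projection matrix are all assumptions made for the example, and the published algorithm may use a different codebook and bit allocation.

```python
import math
import random

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def matvec(m, v):
    return [dot(row, v) for row in m]

def random_rotation(d, rng):
    """Random orthogonal matrix built by Gram-Schmidt on Gaussian rows."""
    rows = []
    for _ in range(d):
        v = [rng.gauss(0.0, 1.0) for _ in range(d)]
        for u in rows:                      # remove components along earlier rows
            c = dot(v, u)
            v = [a - c * b for a, b in zip(v, u)]
        n = math.sqrt(dot(v, v))
        rows.append([a / n for a in v])
    return rows

def quantize(x, rot, bits, clip=3.0):
    """Stage 1 (assumed form): rotate, rescale to unit variance, round on a uniform grid."""
    d = len(x)
    norm = math.sqrt(dot(x, x))
    if norm == 0.0:
        return [0] * d, 0.0
    scale = math.sqrt(d) / norm             # rotated coordinates become roughly N(0, 1)
    levels = 2 ** bits
    step = 2.0 * clip / levels
    idx = []
    for y in matvec(rot, x):
        k = int(math.floor((y * scale + clip) / step))
        idx.append(min(max(k, 0), levels - 1))
    return idx, norm

def dequantize(idx, norm, rot, bits, clip=3.0):
    """Map grid indices back to bin centers and undo the rotation."""
    d = len(idx)
    step = 2.0 * clip / (2 ** bits)
    y = [((k + 0.5) * step - clip) * norm / math.sqrt(d) for k in idx]
    rot_t = [list(col) for col in zip(*rot)]  # inverse of an orthogonal matrix
    return matvec(rot_t, y)

def qjl_encode(residual, proj):
    """Stage 2 (assumed form): keep one sign bit per projection of the residual."""
    signs = [1.0 if s >= 0.0 else -1.0 for s in matvec(proj, residual)]
    return signs, math.sqrt(dot(residual, residual))

def estimate_inner_product(query, x_hat, signs, res_norm, proj):
    """Stage-1 inner product plus a sign-based correction for the residual."""
    m = len(proj)
    corr = dot(signs, matvec(proj, query))
    return dot(query, x_hat) + math.sqrt(math.pi / 2.0) / m * res_norm * corr

# Hypothetical usage on one random key/query pair.
rng = random.Random(0)
d = 64
rot = random_rotation(d, rng)
proj = [[rng.gauss(0.0, 1.0) for _ in range(d)] for _ in range(d)]
key = [rng.gauss(0.0, 1.0) for _ in range(d)]
query = [rng.gauss(0.0, 1.0) for _ in range(d)]

idx, norm = quantize(key, rot, bits=3)
key_hat = dequantize(idx, norm, rot, bits=3)
residual = [a - b for a, b in zip(key, key_hat)]
signs, res_norm = qjl_encode(residual, proj)

print("exact inner product:    ", dot(query, key))
print("estimated inner product:", estimate_inner_product(query, key_hat, signs, res_norm, proj))
```

The rotation and projection matrices depend only on a random seed, never on the data, which is what makes a scheme like this data-oblivious: nothing has to be trained or calibrated before vectors can be compressed. The sign-based correction is unbiased over the random projection, so averaged across many draws of `proj` it recovers the inner product with the residual. A single draw still carries variance.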