Google Research's TurboQuant Slashes AI Memory Use by 6x While Boosting Performance 8x

Summary

Google Research's new TurboQuant algorithm cuts large language model memory usage by up to 6x and delivers an 8x performance boost without compromising output quality. It uses a two-step compression process that works on existing models with no additional training.

Key Points

- Google Research unveils TurboQuant, a new AI-compression algorithm that reduces large language model memory usage by up to 6x while delivering an 8x performance boost without sacrificing output quality.

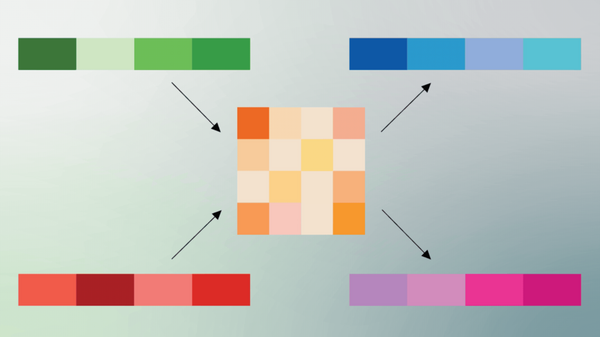

- TurboQuant works in two steps: PolarQuant first converts vector data into polar coordinates for efficient compression, then Quantized Johnson-Lindenstrauss (QJL) applies a 1-bit error-correction layer to preserve the accuracy of the model's attention scores.

- Tests on the open Gemma and Mistral models show perfect downstream results with the key-value cache quantized to just 3 bits and no additional training, which makes TurboQuant especially promising for mobile AI applications.
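
To make the two-step idea concrete, here is a toy Python sketch. It is not Google's implementation. It assumes a simplified polar quantizer that keeps each radius exact and snaps each angle to 3 bits, followed by a generic 1-bit sign sketch (random Gaussian projections) of the leftover residual that corrects the query-key dot product used in attention scoring. All function names and the sizes `d` and `m` are hypothetical, and `m` is set far larger than a realistic bit budget only so the estimate is easy to check.

```python
import math
import random


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def polar_quantize(vec, bits):
    """Toy polar quantizer (an assumption, not PolarQuant itself):
    treat consecutive coordinates as (x, y) pairs, keep each radius exact,
    and snap each angle to the center of one of 2**bits uniform bins."""
    levels = 2 ** bits
    step = 2 * math.pi / levels
    out = []
    for x, y in zip(vec[0::2], vec[1::2]):
        r = math.hypot(x, y)
        theta = math.atan2(y, x)  # angle in [-pi, pi]
        idx = int((theta + math.pi) / step) % levels
        theta_hat = -math.pi + (idx + 0.5) * step
        out.extend([r * math.cos(theta_hat), r * math.sin(theta_hat)])
    return out


def sign_sketch(vec, projections):
    """Store one bit per projection: the sign of each random projection."""
    return [1 if dot(s, vec) >= 0 else -1 for s in projections]


def estimate_dot(query, sign_bits, norm, projections):
    """Estimate <query, v> from v's sign bits and its norm.
    For Gaussian s, E[(s.q) * sign(s.v)] = sqrt(2/pi) * <q, v> / ||v||,
    so rescaling the average by sqrt(pi/2) * ||v|| gives an unbiased estimate."""
    total = sum(dot(s, query) * b for s, b in zip(projections, sign_bits))
    return math.sqrt(math.pi / 2) * norm * total / len(projections)


rng = random.Random(0)
d, m = 16, 2000  # hypothetical vector size and number of projections
key = [rng.gauss(0, 1) for _ in range(d)]
query = [rng.gauss(0, 1) for _ in range(d)]
projections = [[rng.gauss(0, 1) for _ in range(d)] for _ in range(m)]

# Step 1: coarse 3-bit angle quantization of the key.
key_hat = polar_quantize(key, bits=3)

# Step 2: 1-bit sketch of what step 1 lost, used to correct the score.
residual = [k - kh for k, kh in zip(key, key_hat)]
res_norm = math.sqrt(dot(residual, residual))
res_bits = sign_sketch(residual, projections)

exact = dot(query, key)
coarse = dot(query, key_hat)
corrected = coarse + estimate_dot(query, res_bits, res_norm, projections)

print(f"exact score:     {exact:.4f}")
print(f"coarse only:     {coarse:.4f}")
print(f"with correction: {corrected:.4f}")
```

The design point this illustrates is that the correction step never reconstructs the key itself. It only stores sign bits plus one norm, and the attention score is repaired directly at query time.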