AI Compute Surges 1 Trillion Times Since 2010, Costs Plummet 900x as Human-Level Agents Near Reality

Summary

AI training compute has grown 1 trillion-fold since 2010, while deployment costs have fallen by up to 900x annually. With global capacity forecast to reach 100 million H100-equivalents by 2027, near-human-level autonomous AI agents capable of handling complex, multi-week tasks are rapidly approaching reality.

Key Points

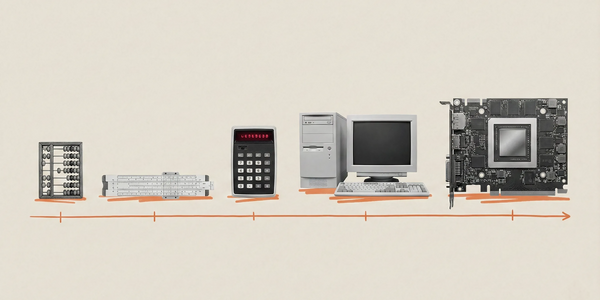

- AI training compute has grown 1 trillion-fold since 2010, driven by faster chips, high-bandwidth memory, and massive GPU clusters that connect hundreds of thousands of processors into warehouse-scale supercomputers.

- Software efficiency gains are halving the compute required to reach a fixed performance level every eight months, and AI deployment costs have fallen by up to 900x annually, making AI radically cheaper and more accessible.

- Global AI compute capacity is forecast to reach 100 million H100-equivalents by 2027, potentially delivering another 1,000x in effective compute by 2028. That would pave the way for near-human-level autonomous AI agents capable of managing complex, multi-week tasks.
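
To put the headline figures on a common per-year footing, the arithmetic can be sketched in a few lines of Python. This is a rough illustration, not the method behind the original figures: the function names are made up for this example, and the 15-year window used for the "since 2010" growth is an assumption, because the article does not state an end year.

```python
import math


def annual_factor_from_halving(months_to_halve: float) -> float:
    """Yearly reduction factor implied by a halving period given in months."""
    return 2 ** (12 / months_to_halve)


def implied_annual_growth(total_factor: float, years: float) -> float:
    """Constant yearly growth factor that compounds to total_factor over `years`."""
    return total_factor ** (1 / years)


def doubling_time_months(annual_growth: float) -> float:
    """Months needed to double at a constant yearly growth factor."""
    return 12 * math.log(2) / math.log(annual_growth)


if __name__ == "__main__":
    # Halving every eight months (figure from the article).
    efficiency = annual_factor_from_halving(8)
    print(f"Software efficiency gain: about {efficiency:.2f}x per year")

    # 1 trillion-fold growth since 2010. ASSUMPTION: a 15-year window;
    # the article gives no end year, so change `years` as needed.
    years = 15
    growth = implied_annual_growth(1e12, years)
    print(f"Implied compute growth: about {growth:.1f}x per year")
    print(f"Implied doubling time: about {doubling_time_months(growth):.1f} months")
```

Halving every eight months works out to about 2.8x per year, far below 900x, so the cost decline of up to 900x annually cannot come from software efficiency alone. The article does not break down what else contributes to it.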