Most Self-Hosted LLM Users Are Wasting Their Local AI's True Potential

Summary

Many self-hosted LLM users squander their local AI's true power by treating it like a basic chatbot. A local model can instead serve as a silent, privacy-first backend engine that automates workflows, controls smart home systems, and manages entire tasks autonomously, with no cloud dependency or rate limits.

Key Points

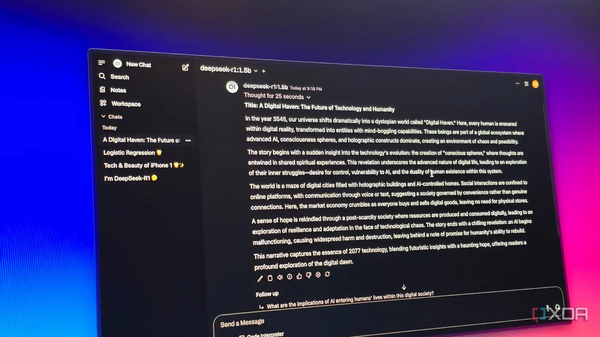

- Most self-hosted LLM users fall into a 'ChatGPT clone' trap, limiting their powerful local models to basic chat interfaces instead of leveraging their full capabilities as integrated backend engines.

- Self-hosted LLMs keep data on the user's own hardware and allow deep system integration: models can be connected directly to file systems, local scripts, and apps without cloud API calls, rate limits, or restrictive safety filters.

- By treating local LLMs as silent background engines rather than chat tools, users can automate knowledge management, control smart home systems through natural language, and deploy autonomous agents like AgenticSeek to handle entire workflows without step-by-step human prompting.
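Here is a minimal sketch of the knowledge-management case that makes the "silent background engine" idea concrete. Instead of a chat window, a script sends each note in a folder to a model running on the same machine and collects a one-line summary. It assumes an Ollama server listening on its default port (11434) and using its non-streaming `/api/generate` endpoint. The model name `llama3`, the `notes` folder, and the helper names (`build_payload`, `post_json`, `summarize_notes`) are hypothetical choices for illustration. They are not part of any tool the article describes.

```python
import json
import urllib.request
from pathlib import Path
from typing import Callable

# Assumption: an Ollama server on its default local port. Other local runtimes
# expose different endpoints and payload shapes.
OLLAMA_URL = "http://localhost:11434/api/generate"
MODEL = "llama3"  # hypothetical; use any model you have pulled locally


def build_payload(note_text: str, model: str = MODEL) -> dict:
    """Build a non-streaming generate request asking for a short summary."""
    prompt = (
        "Summarize the following note in one sentence and suggest up to three tags.\n\n"
        + note_text
    )
    return {"model": model, "prompt": prompt, "stream": False}


def post_json(url: str, payload: dict) -> dict:
    """POST a JSON payload to the local server and decode the JSON reply."""
    data = json.dumps(payload).encode("utf-8")
    req = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(req, timeout=120) as resp:
        return json.loads(resp.read().decode("utf-8"))


def summarize_notes(
    folder: Path,
    post: Callable[[str, dict], dict] = post_json,
) -> dict:
    """Send every Markdown note in `folder` to the local model.

    Requests go only to localhost, so note contents never leave the machine.
    Returns a mapping of file name to the model's stripped reply text.
    """
    results = {}
    for note in sorted(folder.glob("*.md")):
        reply = post(OLLAMA_URL, build_payload(note.read_text(encoding="utf-8")))
        results[note.name] = reply.get("response", "").strip()
    return results
```

Running `summarize_notes(Path("notes"))` from a cron job or file watcher turns this into a background task that needs no prompting. The `post` parameter is injectable, so the loop can be tested without a running server or pointed at a different local runtime.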