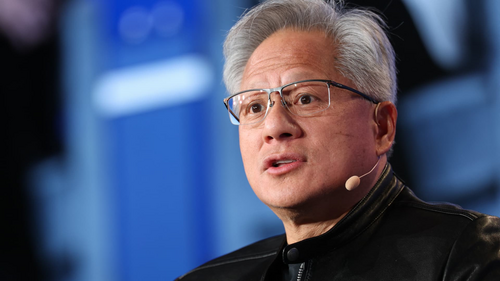

OpenAI Co-Founder Ilya Sutskever Reveals $7 Billion Stake During Musk vs. OpenAI Trial

OpenAI co-founder Ilya Sutskever revealed during testimony in the ongoing Elon Musk vs. OpenAI trial that he holds a $7 billion personal stake in the AI startup, a holding that makes him one of the company's largest individual shareholders.