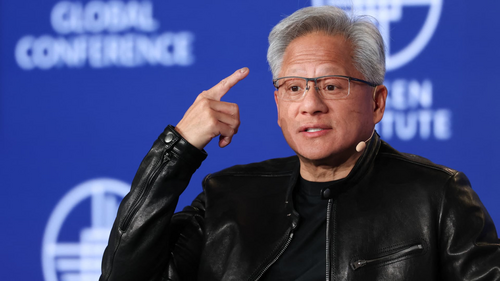

Nvidia Partners With David Silver's Ineffable Intelligence to Build AI That Learns Through Trial and Error

Nvidia is partnering with Ineffable Intelligence, the $1.1 billion-backed AI startup founded by DeepMind reinforcement learning pioneer David Silver, to co-develop AI systems that learn through trial and error using Nvidia's latest Grace Blackwell chips. The partnership aims to push beyond conventional AI trained on human data toward systems that continuously discover new …