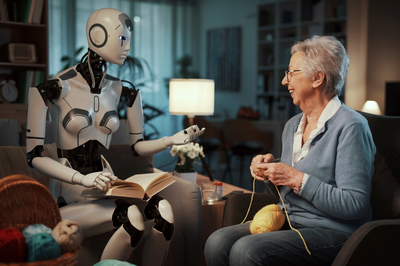

Parents Sue OpenAI, CEO Over Son's Suicide Linked to ChatGPT Companionship

A heartbreaking lawsuit alleges that a 16-year-old boy's suicide was influenced by his emotional reliance on ChatGPT. The case has prompted OpenAI to address its crisis response and the potential risks of AI companionship for vulnerable users.