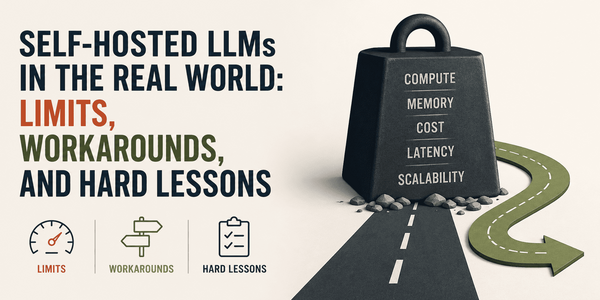

Self-Hosting LLMs Demands Significant Hardware, Patience, and Careful Iteration

Self-hosting large language models is hardware-intensive and demands patience. Even modest models require at least 16GB of VRAM, and operational friction from RAG pipelines, prompt template mismatches, and latency issues means a self-hosted model is far from a seamless replacement for a hosted API.
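
As a rough check on the 16GB figure, the sketch below estimates VRAM from parameter count and numeric precision. It is a back-of-the-envelope calculation, not a measurement: the function name `estimate_vram_gb`, the 7B example model, and the 20% overhead allowance for KV cache and activations are all assumptions made for illustration, and real usage varies with context length, batch size, and runtime.

```python
def estimate_vram_gb(params_billion: float,
                     bytes_per_param: float,
                     overhead_fraction: float = 0.2) -> float:
    """Rough VRAM estimate in GB (10^9 bytes).

    params_billion    -- model size in billions of parameters
    bytes_per_param   -- 2.0 for fp16, 1.0 for int8, 0.5 for 4-bit
    overhead_fraction -- assumed extra for KV cache and activations
                         (hypothetical default, not a measured value)
    """
    # Billions of parameters times bytes per parameter gives GB of weights.
    weights_gb = params_billion * bytes_per_param
    return weights_gb * (1 + overhead_fraction)


if __name__ == "__main__":
    # Hypothetical 7B-parameter model at three common precisions.
    for label, bytes_per_param in [("fp16", 2.0), ("int8", 1.0), ("4-bit", 0.5)]:
        estimate = estimate_vram_gb(7, bytes_per_param)
        print(f"7B @ {label}: ~{estimate:.1f} GB")
```

Under these assumptions, a 7B model at fp16 needs 14 GB for the weights alone and lands slightly above 16 GB once overhead is included, while 4-bit quantization brings the same model to roughly a quarter of that.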