Breakthroughs in Transformer Models: DeepSeek V3, OLMo 2, and Gemma 3 Unleash New Capabilities

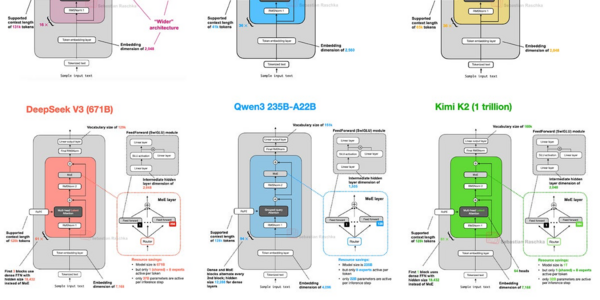

DeepSeek V3, OLMo 2, and Gemma 3 each refine the transformer architecture with a different technique: DeepSeek V3 uses Multi-Head Latent Attention to improve efficiency by compressing the key-value cache, OLMo 2 applies QK-norm to stabilize training, and Gemma 3 relies on sliding window attention to reduce its memory footprint.
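
To make the memory argument for sliding window attention concrete, here is a minimal sketch in plain Python. It is not taken from any of these models' code: the function names `sliding_window_mask` and `cached_positions` are hypothetical, the sequence length and window size are illustrative values, and the mask assumes causal (left-to-right) attention in which each token sees only itself and the most recent preceding tokens.

```python
from typing import List, Optional


def sliding_window_mask(seq_len: int, window: int) -> List[List[bool]]:
    """Build a causal sliding-window mask.

    mask[i][j] is True when query position i may attend to key position j,
    i.e. when j is one of the `window` most recent positions up to and
    including i.
    """
    if window < 1:
        raise ValueError("window must be at least 1")
    return [
        [0 <= i - j < window for j in range(seq_len)]
        for i in range(seq_len)
    ]


def cached_positions(seq_len: int, window: Optional[int] = None) -> int:
    """Key/value positions one layer must keep after seq_len tokens.

    With full (global) attention every past position stays in the cache;
    with a sliding window only the last `window` positions are needed.
    """
    return seq_len if window is None else min(seq_len, window)


if __name__ == "__main__":
    # Illustrative values only, not any model's real configuration.
    for row in sliding_window_mask(seq_len=5, window=3):
        print("".join("x" if allowed else "." for allowed in row))
    print(cached_positions(4096), cached_positions(4096, window=512))
```

Each row of the printed mask has at most three marked positions, so the number of cached keys and values per layer stops growing once the sequence is longer than the window. That bound is the source of the memory savings.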